entertAIn play

Emotion detection brings a new depth of immersion into games. The entertAIn play plugin enables game engines to use emotions as input.

Emotion Detection from the Voice

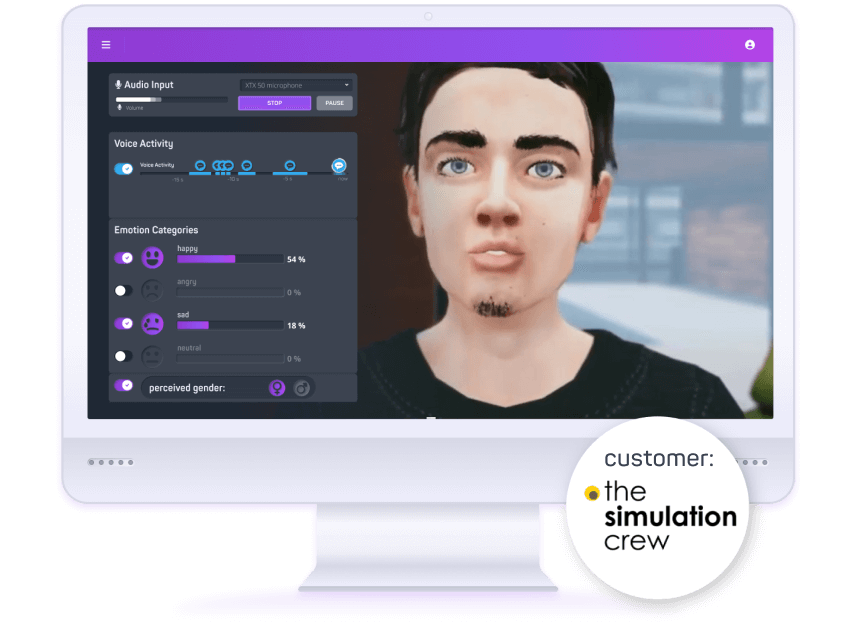

Bring immersion to the next level. entertAIn play detects your players’ emotions from their voice. Realtime analysis lets you implement novel gameplay scenarios.

entertAIn play provides 4 basic emotions and is available on any platform, from a VR headset to an everyday mobile phone.

A New Depth of Immersion

Emotion detection brings unmatched depth of immersion into your games. Using embedded microphones is a non-invasive way to capture your players’ feelings. entertAIn play performs excellently on mobile VR as well as stand-alone HMDs.

How entertAIn works:

1. Listen to the Player

Voice Activity Detection (VAD) automatically captures your player’s voice through the microphone.

2. Detect the Emotion

The entertAIn play AI models analyze over 6,000 voice features. They identify the players’ emotions and their intensity in realtime.

3. Trigger Interaction

Based on the output values, you can now trigger events in the game that fit the players’ moods.
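As a rough illustration of step 3, the sketch below shows one way game logic could react to emotion output. It does not use the plugin's actual API: `EmotionReading`, `EmotionTriggers`, the lowercase category names, the 0.0 to 1.0 intensity scale, and the threshold value are all assumptions made for this example.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


# Hypothetical data shape; the plugin's real output format may differ.
@dataclass
class EmotionReading:
    category: str     # assumed: "happy", "angry", "sad" or "neutral"
    intensity: float  # assumed to be normalized to 0.0 .. 1.0


class EmotionTriggers:
    """Maps emotion categories to game callbacks, fired above a threshold."""

    def __init__(self, threshold: float = 0.6) -> None:
        self.threshold = threshold
        self._handlers: Dict[str, List[Callable[[EmotionReading], None]]] = {}

    def on(self, category: str, handler: Callable[[EmotionReading], None]) -> None:
        # Register a callback for one emotion category.
        self._handlers.setdefault(category, []).append(handler)

    def dispatch(self, reading: EmotionReading) -> int:
        # Ignore weak readings so the game does not react to every fluctuation.
        if reading.intensity < self.threshold:
            return 0
        handlers = self._handlers.get(reading.category, [])
        for handler in handlers:
            handler(reading)
        return len(handlers)  # number of callbacks that were fired


# Usage: record which game events would be triggered.
events: List[str] = []
triggers = EmotionTriggers(threshold=0.6)
triggers.on("angry", lambda r: events.append("calm_music"))
triggers.on("happy", lambda r: events.append("bonus_round"))

triggers.dispatch(EmotionReading("angry", 0.8))  # above threshold, fires
triggers.dispatch(EmotionReading("happy", 0.3))  # below threshold, ignored
```

The threshold keeps low-intensity readings from triggering events; in a real game it would likely need tuning per emotion and per scenario.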

Drag and Drop:

3-Minute Integration

entertAIn play is lightweight and easy to integrate into any game. The plugin is ready to use in a few minutes. Watch our tutorial on the installation.

EMOTION AI

4 default emotions.

Infinitely customizable.

LIGHTWEIGHT

Minimal requirements. Performance on any platform.

REALTIME

Human-like interaction speed.

Adaptable reaction time.

4 Default Emotions, Infinite Possibilities

Below you can find a list of the key features of entertAIn play. You can also download our factsheet as a PDF.

- Emotion Categories: Happy, Angry, Sad, Neutral

- Emotion Dimensions: Expression, Inclination

- Voice Activity Detection: Only captures human voice

- Speaker Verification: Only captures the player’s voice

- Speaker Attributes: Gender, Age

- XR-Ready: suitable for VR & AR applications

- Lightweight package: as low as 13 MB

- Multi-platform: Windows, macOS, iOS, Android

- Memory Usage: as low as 10 MB of memory

- Negligible CPU Usage: real-time factor under 1

- Game Engine: Unity, Unreal
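For readers unfamiliar with the term, the real-time factor is commonly defined as processing time divided by the duration of the audio processed, so a value under 1 means analysis finishes faster than the audio plays. The short sketch below works through that ratio; the function name and the timing figures are illustrative assumptions, not measurements of entertAIn play.

```python
def real_time_factor(processing_seconds: float, audio_seconds: float) -> float:
    """Return processing time divided by audio duration."""
    if audio_seconds <= 0:
        raise ValueError("audio_seconds must be positive")
    return processing_seconds / audio_seconds


# Illustrative numbers only: 0.25 s of compute for 2 s of recorded speech.
rtf = real_time_factor(0.25, 2.0)
keeps_up_with_realtime = rtf < 1.0
```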

the simulation crew

“It helped us add a new layer of emotional interaction to our scenarios in VR.”

Eric Jutten,

CEO

Success story for The Simulation Crew

“Emotions are an integral part of our interactions,” says Eric Jutten, CEO of the Dutch company The Simulation Crew. To ensure their VR trainer, Iva, is emotionally capable, they looked for a solution. entertAIn play helped them integrate emotion AI into their product. Read the complete story.

Customers, Projects & Success stories

entertAIn observe for Playtesting

How does your player feel about your game? entertAIn observe gives you insight into your players’ emotions.

devAIce® Emotion and Scene Detection

Learn more about devAIce®, audEERING’s lightweight technology for emotion detection, scene detection, and many other purposes.